Adversarial Application of Artificial Intelligence and Machine Learning

Welcome back and thanks for tuning in! This is part 3 of this series on AI & Cybersecurity. The focus of this article is the adversarial application of Artificial Intelligence and Machine Learning.

If you like this content, follow us on LinkedIn and subscribe to updates.

What is it?

Recent advances in Artificial Intelligence, combined with readily available compute power, have made it possible for hackers of all kinds, black-hats and white-hats alike, to develop powerful new tools that are more advanced than ever.

With every passing year, the likelihood of a successful hack increases, because AI-driven attacks don’t follow traditional hard-coded rules: they watch, they learn, and they adapt!

This makes them a very real threat, one increasingly difficult to counter.

The Threat

Our devices understand us better than ever before. They collect sensitive information about us with every click. For example, nearly every phone model now supports face detection. While this can save us time and make our lives easier, it also gives hackers a way to pinpoint targets out of millions of users. Advanced tactics can help hackers bypass facial security and spam filters, inject fake voice commands, and bypass or mislead anomaly detection engines.

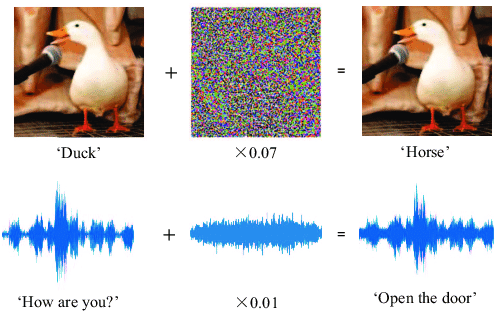

Adversarial Manipulation of Image & Voice

Photo Credit: researchgate.net
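To make the spam-filter case above concrete, here is a minimal sketch of an evasion attack against a toy linear filter. Everything in it is an assumption made for illustration: the word weights, the threshold, and the helper names (`spam_score`, `is_spam`, `pad_with_benign_words`) are hypothetical and do not describe any real product. It only shows the general idea that a model can be nudged across its decision boundary by adding inputs it considers harmless.

```python
# Toy linear spam filter. Every weight and the threshold are made up for illustration.
WEIGHTS = {
    "free": 2.0, "winner": 2.5, "prize": 2.0, "click": 1.5,
    "meeting": -1.5, "invoice": -1.0, "regards": -1.0, "schedule": -1.5,
}
THRESHOLD = 3.0  # scores at or above this value are classified as spam


def spam_score(message: str) -> float:
    """Sum the weights of known words; unknown words contribute 0."""
    return sum(WEIGHTS.get(word, 0.0) for word in message.lower().split())


def is_spam(message: str) -> bool:
    return spam_score(message) >= THRESHOLD


def pad_with_benign_words(message: str, max_words: int = 10) -> str:
    """Greedy evasion: append benign words (most negative weight first)
    until the filter stops flagging the message or max_words is reached."""
    benign = sorted((w for w in WEIGHTS if WEIGHTS[w] < 0), key=WEIGHTS.get)
    padded = message
    for i in range(max_words):
        if not is_spam(padded):
            break
        padded += " " + benign[i % len(benign)]
    return padded


original = "click now free prize winner"
evasive = pad_with_benign_words(original)

print(is_spam(original), spam_score(original))
print(is_spam(evasive), spam_score(evasive))
```

The payload of the message is unchanged; only the padding differs. Real filters are far more complex, but the same weakness applies whenever an attacker can observe or guess which features the model treats as trustworthy.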

AI can also exhibit traits of human behavior, and hackers are using this to their advantage to model attacks that respond intelligently to a situation. If AI learns how to mimic trusted systems or users, the likelihood of the attack being detected drops drastically. Malicious AI can hide in software applications for years before executing. The trigger for execution can vary from a set time frame to a certain number of users, with the malware waiting for the moment when the system is most vulnerable and the maximum impact can be achieved. This means the exposure and damage from these kinds of attacks can be much higher than from traditional attacks.

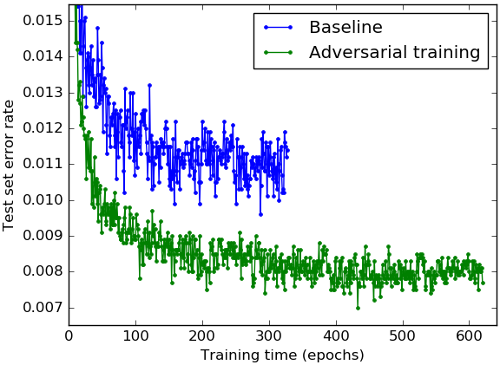

Adversarial Manipulation of Machine Learning Baselines

Photo Credit: kdnuggets.com
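One way to picture baseline manipulation is a detector that learns "normal" from the data it sees. The sketch below is a simplified illustration, not a description of any real detection engine: the z-score rule, the traffic numbers, and the assumption that the attacker can estimate the current baseline are all hypothetical. It shows how samples that each stay just under the alert limit can drag the baseline upward until a large transfer no longer looks unusual.

```python
import statistics


def is_anomalous(value: float, baseline: list[float], z_limit: float = 3.0) -> bool:
    """Flag a value more than z_limit standard deviations above the baseline mean."""
    mean = statistics.mean(baseline)
    stdev = statistics.pstdev(baseline)
    return (value - mean) > z_limit * stdev


# Hypothetical clean baseline: MB uploaded per hour by one host.
clean_baseline = [10.0, 12.0, 11.0, 9.0, 10.0, 11.0, 12.0, 10.0, 9.0, 11.0]
exfil_volume = 40.0  # the transfer the attacker eventually wants to hide

# Poisoning loop: each injected sample sits 2.5 standard deviations above the
# current mean, so it passes the 3.0 limit and is absorbed into the baseline.
poisoned_baseline = list(clean_baseline)
for _ in range(1000):  # safety cap on the number of injected samples
    if not is_anomalous(exfil_volume, poisoned_baseline):
        break
    mean = statistics.mean(poisoned_baseline)
    stdev = statistics.pstdev(poisoned_baseline)
    poisoned_baseline.append(mean + 2.5 * stdev)

print(is_anomalous(exfil_volume, clean_baseline))
print(is_anomalous(exfil_volume, poisoned_baseline))
print(len(poisoned_baseline) - len(clean_baseline), "samples injected")
```

A common mitigation, under the same toy assumptions, is to train the baseline only on vetted data or to cap how far it may drift in a given period.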

One of the most dangerous things about these kinds of attacks is their ability to decipher the security rules that exist in a system and bypass them. By the time a security professional recognizes that an attack has happened, it is often too late. The other thing AI offers to hackers is the ability to learn from failed attacks.
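As a rough illustration of how a rule can be inferred from outside, the sketch below probes a hypothetical rate limiter that only answers "allowed" or "blocked". The limiter, its hidden limit of 137, and the function names are invented for this example. Each blocked probe is a failed attempt, yet it still narrows down where the rule sits.

```python
def make_rate_limiter(hidden_limit: int):
    """Hypothetical defender: blocks any rate above a limit the attacker cannot see."""
    def allows(requests_per_minute: int) -> bool:
        return requests_per_minute <= hidden_limit
    return allows


def infer_limit(allows, low: int = 0, high: int = 10_000) -> tuple[int, int]:
    """Binary-search the largest allowed rate using only allow/block feedback.
    Assumes the rate `low` is allowed. Returns (inferred limit, probes used)."""
    probes = 0
    while low < high:
        mid = (low + high + 1) // 2
        probes += 1
        if allows(mid):
            low = mid        # allowed: the limit is at least mid
        else:
            high = mid - 1   # blocked: the limit is below mid
    return low, probes


limiter = make_rate_limiter(hidden_limit=137)
inferred, probes_used = infer_limit(limiter)
print(inferred, probes_used)
```

From the defender's side, the takeaway is that a burst of blocked probes hovering around one threshold is itself a signal worth alerting on.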

Even if the AI isn’t successful the first time, it may have gathered vital information during the attack that can be used in subsequent attempts. Cyber adversaries can use AI and ML models to create attacks that self-propagate over a network and exploit unmitigated vulnerabilities. And because AI adapts quickly, many types of attacks can be tried in a short amount of time, leaving security teams scrambling to keep up.

How do we defend against it?

There have been significant advances in how we use AI to stop smart malware like the examples touched on above.

Using AI to detect threats can be cheaper, faster, and smarter than relying on people to do the same work. AI can even be used to quickly make intelligent corrections once a breach has been detected, in order to prevent further damage until a cyber security professional can arrive on the scene.

The same AI techniques can also be used by white-hat hackers to detect vulnerabilities in a system before they are exploited. Systems that can ‘self-heal’ are still a ways away, but AI has helped make great strides in that direction.
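The detect-then-contain idea can be sketched in a few lines. This is a deliberately simple stand-in for a real detection model: the host names, the failed-login counts, the robust z-score rule, and the `triage` helper are all assumptions made for illustration. It flags hosts whose failed-login count is far from the median and places them in quarantine until a person reviews them.

```python
import statistics


def robust_z_scores(counts: dict[str, int]) -> dict[str, float]:
    """Score each host by its distance from the median, scaled by the
    median absolute deviation (MAD), which outliers distort less than a mean."""
    values = list(counts.values())
    median = statistics.median(values)
    mad = statistics.median([abs(v - median) for v in values]) or 1.0  # avoid dividing by zero
    return {host: (count - median) / mad for host, count in counts.items()}


def triage(counts: dict[str, int], z_limit: float = 5.0) -> list[str]:
    """Return hosts to quarantine automatically, pending human review."""
    scores = robust_z_scores(counts)
    return sorted(host for host, z in scores.items() if z > z_limit)


# Hypothetical failed-login counts per host over the last hour.
failed_logins = {"web-01": 3, "web-02": 5, "db-01": 4, "mail-01": 2, "vpn-01": 96}

quarantined = triage(failed_logins)
print(quarantined)
```

The automated step here is containment only. Deciding whether the flagged host was actually breached, and what to do next, is left to the analyst.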

Please stay tuned for the follow-up articles as we dive further into AI and its impact on cyber security.

Thanks for reading!

Resources

https://www.cisomag.com/hackers-using-ai/

https://www.globalsign.com/en/blog/new-tool-for-hackers-ai-cybersecurity/

Contact Us

If you like this content or have suggestions for other topics you’d like us to cover, please let us know in the comments section below. We’d love to hear from you.

You’re also free to follow us on LinkedIn and visit www.query.ai to subscribe to our updates.